AI has reshaped SEO by making content production faster and more scalable than ever before. Marketers can generate full articles in minutes, target new keywords quickly, and expand their reach without increasing workload. But speed alone no longer guarantees results. Search engines now evaluate depth, usefulness, and authenticity, not just keyword presence or publishing frequency.

Humanized AI content responds to this shift. It combines AI efficiency with human judgment, clarity, and intent, and the result is content that answers real questions, earns trust, and performs consistently in search. As SEO continues to evolve, humanizing AI output has become essential for visibility, authority, and sustainable growth.

Why Traditional AI-Generated Content Is Losing SEO Effectiveness

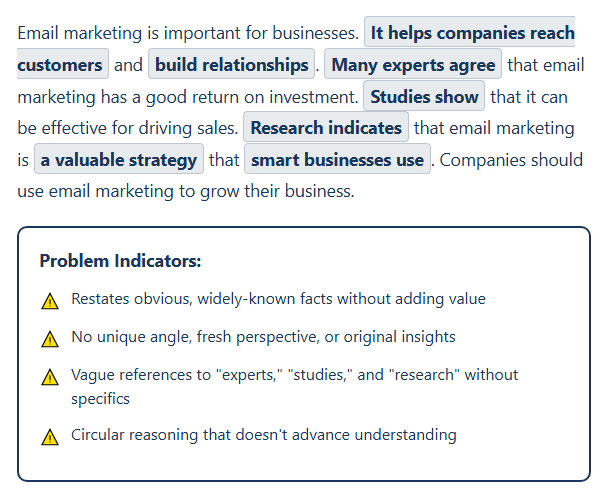

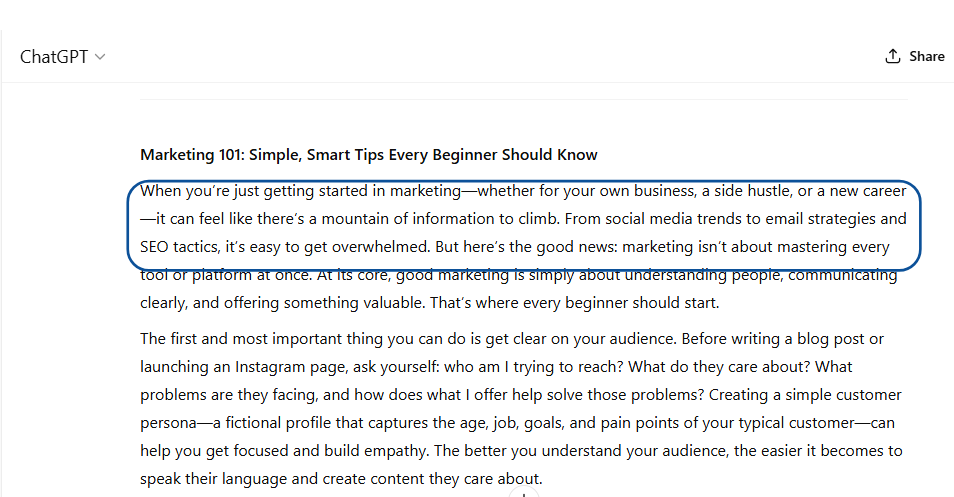

AI made content creation faster, but speed exposed a critical weakness. Many AI-generated articles appear complete on the surface, yet fail to perform in search. They provide information but lack precision, intent, and clarity. Search engines now evaluate how well content serves readers, not just whether it exists. Generic output struggles to compete because it does not demonstrate meaningful value.

- Predictable Sentence Patterns: Repetitive phrasing makes content easier to identify as automated. This weakens credibility and reduces reader engagement.

- Surface-Level Explanations: AI summarizes widely available information without adding specificity or depth. Readers leave when the content does not fully answer their questions.

- Weak Search Intent Alignment: Generic output fails to reflect the user’s actual goal. This disconnect reduces relevance and limits ranking potential.

- Lack of Contextual Awareness: AI struggles to prioritize what matters most to a specific audience. Content becomes broad instead of purposeful.

- Poor Engagement Signals: Low retention, short session durations, and high bounce rates suggest to search engines that the content offers limited value.

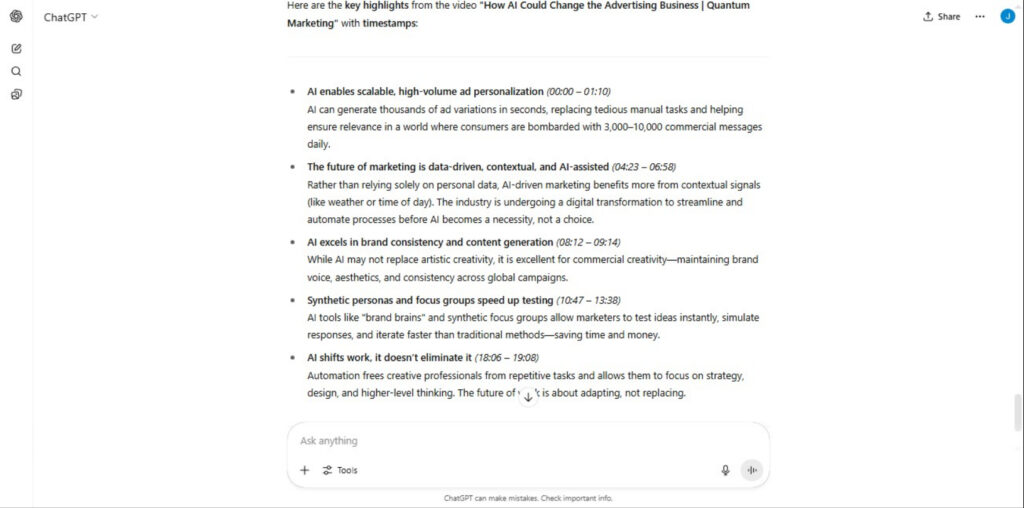

What Humanized AI Content Means In Modern SEO

Humanized AI content combines automation with deliberate human refinement. AI generates structure, accelerates research, and improves efficiency, while human editing ensures clarity, intent, and relevance. This process transforms raw output into content that communicates naturally and addresses real user needs. The goal is not to hide AI use but to refine the output so it is clearer, more relevant, and more engaging.

Many creators revise AI drafts to improve flow, remove mechanical phrasing, and strengthen relevance. This refinement becomes especially important when adjusting tone, adding specificity, and ensuring the content can bypass AI detection while maintaining authenticity and search performance. Human input introduces judgment, prioritization, and context that automation alone cannot replicate.

Humanized AI content focuses on usefulness rather than volume. It anticipates reader questions, delivers clear answers, and maintains a logical progression. This alignment helps search engines recognize the content as valuable and helps readers trust the information. As SEO shifts toward quality signals, humanized AI content strikes a balance between efficiency and effectiveness.

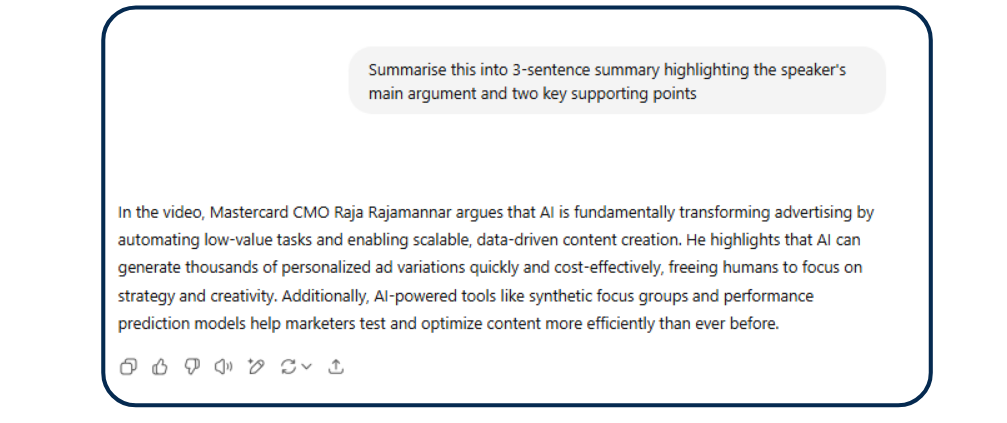

Why Search Engines Favor Humanized AI Content

Search engines evaluate how well content satisfies user intent. Humanized AI content performs better because it delivers clear answers, logical progression, and meaningful depth. Readers stay longer when content communicates naturally and addresses their specific concerns. Strong engagement signals indicate usefulness, which supports higher rankings and broader visibility.

Humanized content also reflects stronger semantic relevance. Human refinement ensures that topics connect logically, supporting comprehensive coverage rather than fragmented explanations. This structure helps search engines understand context, relationships, and authority. Content becomes easier for search engines to interpret and more competitive across related queries.

How Humanized AI Content Builds Trust And Authority

Readers recognize authenticity quickly. Humanized AI content communicates with clarity and purpose, which makes information easier to understand and apply. When content reflects real intent instead of generic phrasing, readers stay longer and explore more pages. This sustained engagement strengthens credibility and supports long-term visibility.

Authority grows when content consistently delivers useful, relevant insights. Human refinement ensures accurate prioritization, logical structure, and meaningful explanations. These qualities signal expertise to both readers and search engines. Over time, trustworthy content earns higher rankings, more repeat traffic, and greater influence in competitive search environments.

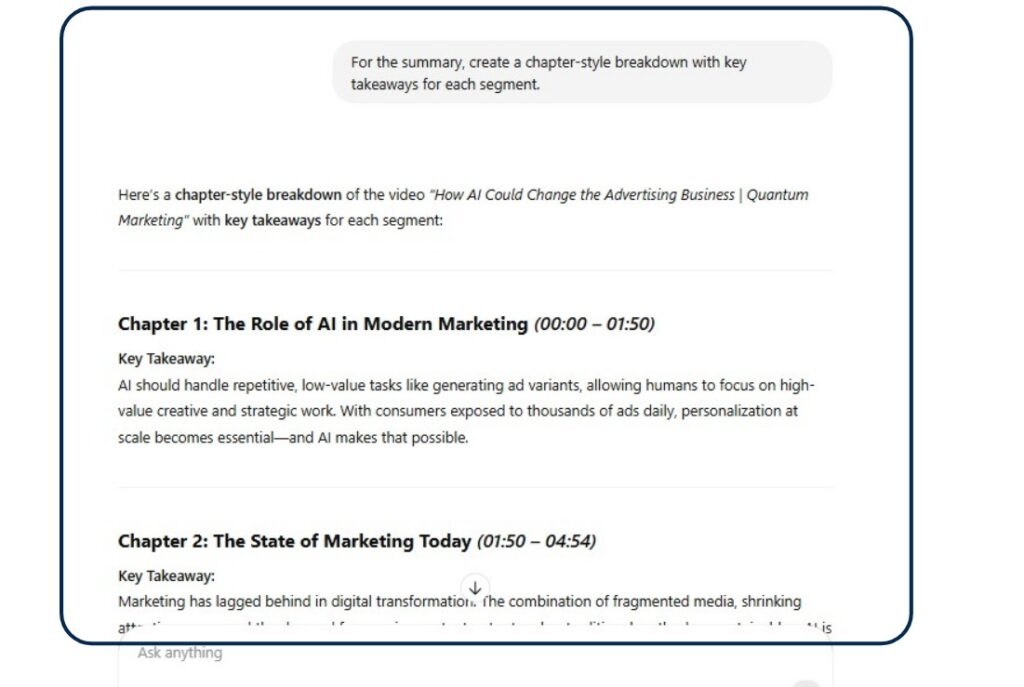

How Humanized AI Content Supports Scalable SEO Growth

Scalability depends on producing consistent, high-quality content without sacrificing relevance. Humanized AI content makes this possible by combining efficiency with editorial control. AI accelerates research and drafting, while human refinement ensures clarity, usefulness, and alignment with search intent. This balance allows teams to publish more content without lowering standards.

Humanized workflows also strengthen topical authority. Consistent quality helps search engines recognize expertise across related subjects. As more valuable content accumulates, rankings can improve across entire keyword clusters rather than on isolated pages.

Key Elements That Make AI Content Sound Human

Humanized AI content succeeds because it reflects deliberate choices in structure, tone, and clarity. Raw AI output often communicates efficiently but lacks nuance and intent. Human refinement introduces specificity, improves flow, and ensures content aligns with reader expectations.

- Natural Sentence Variation: Human editing breaks repetitive patterns and introduces varied rhythm. This makes content easier to read and more engaging.

- Contextual Specificity: Adding relevant examples and precise explanations improves clarity. Readers understand how information applies to real situations.

- Clear Logical Progression: Strong structure guides readers from one idea to the next. This improves comprehension and strengthens topical authority.

- Conversational but Purposeful Tone: Content communicates directly without sounding mechanical. This balance improves trust and readability.

- Audience-Focused Prioritization: Human refinement ensures content addresses what readers need most. This alignment improves relevance and engagement.

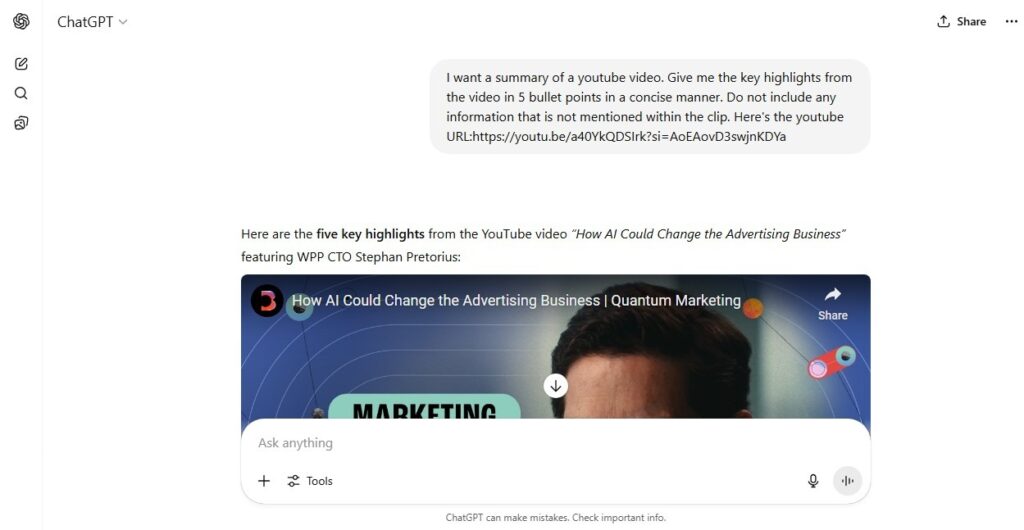

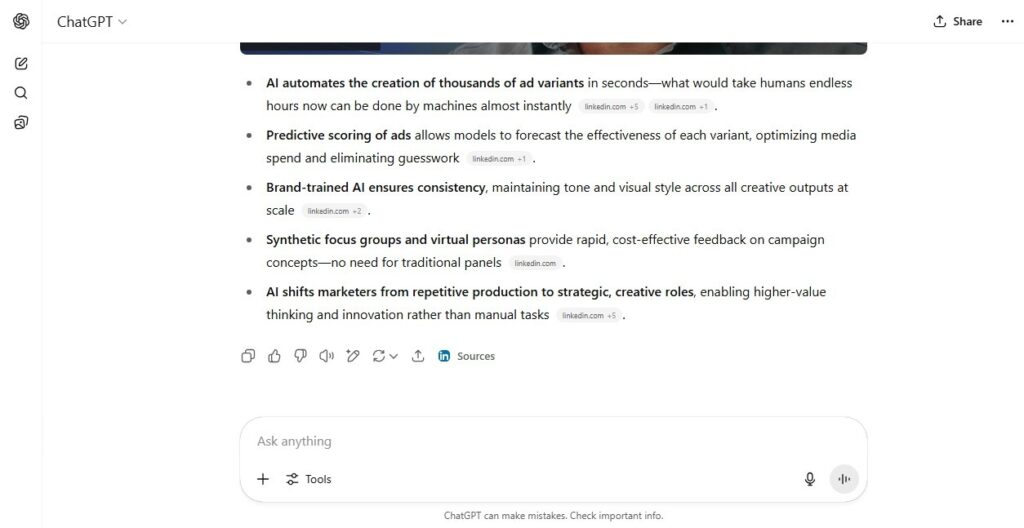

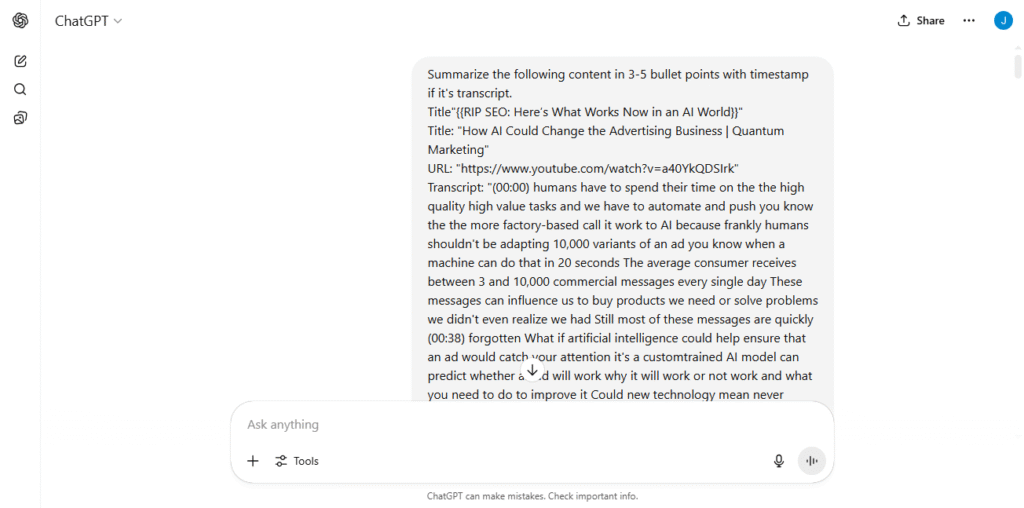

Step-By-Step Process To Create Humanized AI Content

Humanized AI content requires a structured workflow that combines automation with intentional human refinement. AI accelerates early stages, but human judgment ensures clarity, accuracy, and relevance. This process transforms raw output into content that aligns with search intent and reader expectations.

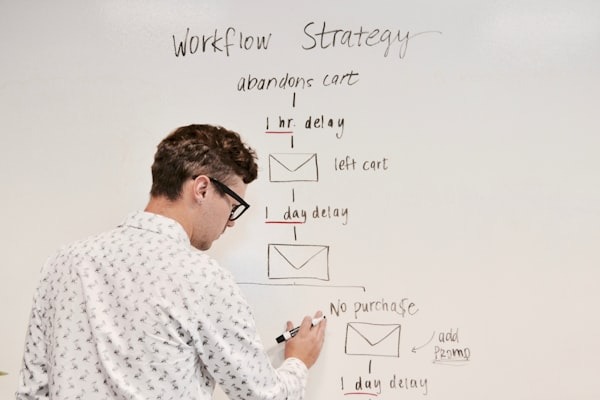

- Start with Strategic AI Drafting: Use AI to generate outlines and initial drafts quickly. Focus on structure and topic coverage rather than final quality.

- Refine Tone And Clarity: Edit sentences to improve flow, remove robotic phrasing, and ensure ideas connect logically. This step introduces natural readability.

- Add Unique Insight And Context: Include examples, explanations, and perspectives that AI cannot generate independently. This strengthens authority and usefulness.

- Align Content With Search Intent: Ensure each section answers real user questions clearly. Content must solve problems, not just present information.

- Perform Final Quality Review: Evaluate readability, coherence, and value. Confirm the content communicates naturally and supports SEO goals.

Wrapping Up

AI alone cannot win modern SEO. Success depends on how well content connects, informs, and earns trust. Humanized AI content delivers that advantage by combining efficiency with clarity and intent. Businesses that refine AI output create stronger authority, better engagement, and lasting visibility. The future of SEO belongs to those who make AI content genuinely useful and human.