Brands are getting mentioned and cited inside AI search and conversational platforms like ChatGPT, Google’s AI Overviews, Google AI Mode, Claude, and Perplexity. With the rise of these and other AI search tools, brands face a new challenge: LLM visibility.

This refers to how often and prominently your brand appears in AI-generated answers: from chatbot responses to AI summaries on search pages.

Users are increasingly trusting these AI answers, often more than traditional search results. In fact, studies show people tend to believe an AI’s response without cross-verifying, giving AI-generated answers more weight than even a #1 Google ranking.

If your competitors’ names show up in AI answers while yours doesn’t, you’re losing opportunities to win those customers. Traditional SEO metrics don’t reveal this gap, which is why marketers and founders need dedicated tools to optimize for AI visibility.

Optimizing for large language models (LLMs), sometimes called Generative Engine Optimization (GEO), means ensuring AI systems find, trust, and cite your content.

The good news is that many principles carry over from SEO (quality content, authority, structured data), but you’ll need new strategies and software to track and improve your presence in AI-driven search.

Below, we explore the best LLM optimization tools for AI visibility, covering content creation, SEO optimization, and brand mention tracking in AI.

Let’s look at how they can help your brand stay visible in AI-generated results.

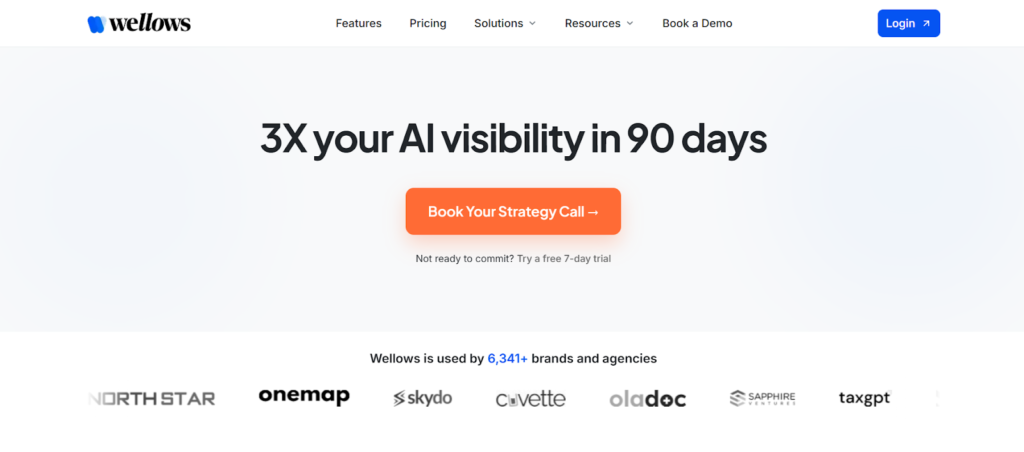

1. Wellows: AI Search Visibility & LLM Citation Tracking Platform

Wellows is an AI search visibility platform built to solve one of the biggest problems in modern search: brands being invisible inside AI-generated answers.

Wellows helps startups and agencies track, understand, and improve how they are interpreted, mentioned, and cited across AI systems like ChatGPT, Gemini, Perplexity, Google AI Overviews, and AI Mode. Instead of relying on outdated SEO signals, Wellows operates as a complete GenAI visibility stack designed for the era of generative search.

Key Features

- AI Search Visibility Tracking

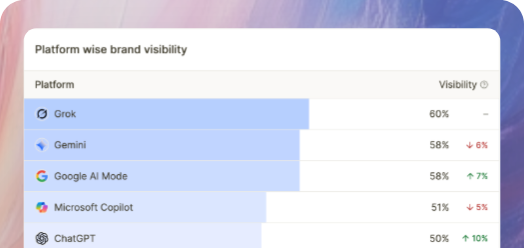

Provides a unified view of brand visibility across all major LLMs, eliminating fragmented platform-by-platform tracking - Visibility Score

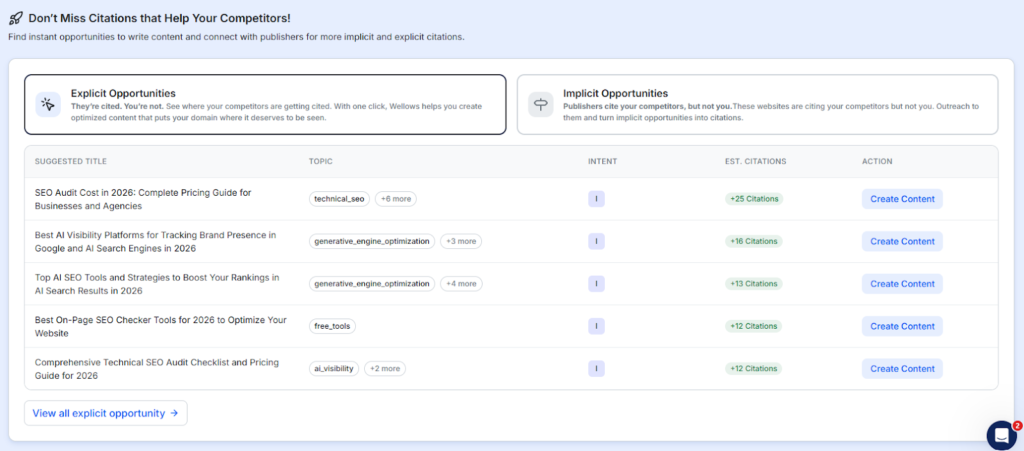

Quantifies overall AI presence across platforms, showing how visible your brand is in AI-generated answers compared to competitors — not just who gets mentioned, but who dominates. - Content Creation & Outreach Insights

Identifies visibility gaps and missed citation opportunities, then guides teams on what content to create and which publishers or sources to target to improve AI visibility. - Competitor AI Intelligence

Shows exactly which competitors are being cited, where they are winning, and how often they appear across AI-generated answers. - Daily Monitoring & Historical Trends

Tracks visibility shifts and long-term citation growth to measure progress in AI search - Action-Oriented Workflows

Turns visibility gaps into concrete actions through content optimization and publisher outreach to secure missed citations

Best For

- Brands struggling with AI invisibility while competitors dominate AI answers

- SEO and marketing teams transitioning to AI-first discovery

- Agencies offering AI visibility, GEO, and LLM optimization services

- Startups and SaaS companies aiming to build authority that AI engines recognize

Why Wellows Stands Out:

Wellows isn’t just another monitoring tool; it functions as a full GenAI visibility stack.

By combining AI search visibility tracking, competitive intelligence, implicit-to-explicit citation recovery, and a unified Visibility Score, Wellows acts as the operating system for brands that want to own their presence in AI-generated search results, not just observe it.

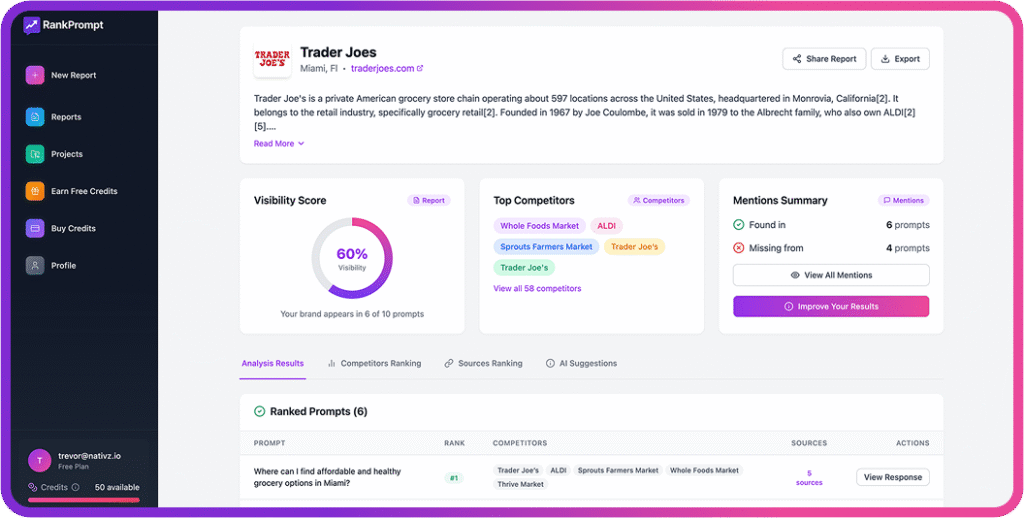

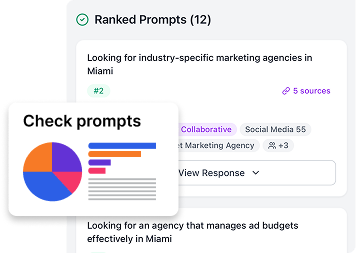

2. Rank Prompt: Specialized LLM Visibility Monitoring

If your goal is to specifically track and optimize brand mentions in AI answers, Rank Prompt is a leading solution.

It is a specialized LLM visibility tool built from the ground up to monitor how your brand appears across generative AI platforms.

Rank Prompt tracks your brand’s visibility across top LLMs and provides AI assistant comparison dashboards to see how you fare on different platforms. It can show you where and how your brand is appearing in AI conversations, and importantly, where it’s missing.

Like Click Raven, the tool also offers competitor benchmarking, which lets you identify gaps where rivals have gained a foothold in AI answers that you haven’t. That is valuable insight for adjusting your content strategy.

Beyond monitoring, Rank Prompt offers practical optimization suggestions to improve your AI presence. For instance, it might recommend adding structured data, better citations, or specific content tweaks if it detects areas where your content could be more “AI-friendly.”

Rank Prompt’s reports are shareable and its dashboards are easy to understand, which is great for agencies or internal teams collaborating on LLM strategy.

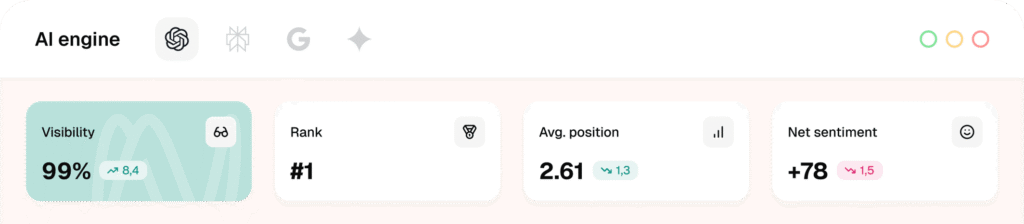

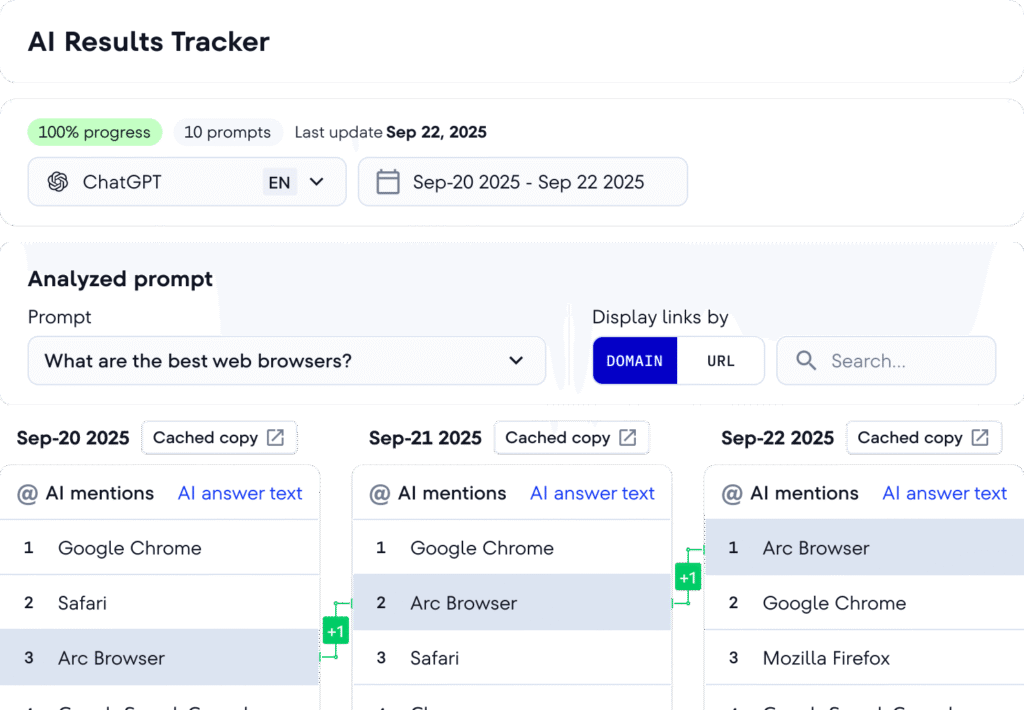

3. SE Ranking: AI Visibility Tracker in a Full SEO Suite

SE Ranking is a well-known SEO platform, and it has recently introduced an AI Visibility Tracker to help businesses monitor their presence in AI-generated search results. This option is ideal for marketers who want to integrate LLM visibility tracking into an existing SEO workflow.

SE Ranking’s tool watches Google’s AI Overviews (SGE/AI snapshots) and ChatGPT mentions, plus other AI engines like Claude, Perplexity, and Gemini.

Within SE Ranking’s dashboard, you can select target queries and see if they trigger AI answers that mention your brand or link to your site.

You’ll get details on how prominently you’re featured, for example, if your link is cited as a source, and which competitors appear in those answers when you don’t.
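To make the idea concrete, here is a minimal sketch of the kind of check described above: given the text of an AI answer and the URLs it cites, does the answer mention your brand, and is your site among the sources? This is not SE Ranking's implementation. The function name `analyze_answer`, the brand "Acme", the domain `acme.example`, and the sample answer are all hypothetical.

```python
import re
from urllib.parse import urlparse


def analyze_answer(answer_text, cited_urls, brand, domain):
    """Report whether an AI answer mentions a brand or cites its domain."""
    # Whole-word, case-insensitive match so "Acme" does not match "Acmeville".
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    mention_count = len(pattern.findall(answer_text))

    # A source counts as ours if its host is the domain or one of its subdomains.
    domain = domain.lower()

    def is_ours(url):
        host = urlparse(url).netloc.lower()
        return host == domain or host.endswith("." + domain)

    cited = any(is_ours(url) for url in cited_urls)
    return {
        "mentioned": mention_count > 0,
        "mention_count": mention_count,
        "cited": cited,
    }


# Hypothetical answer text and source list, made up for illustration.
answer = "Popular options include Acme CRM and Globex. Acme is often praised for pricing."
sources = ["https://www.globex.example/pricing", "https://blog.acme.example/guide"]
result = analyze_answer(answer, sources, brand="Acme", domain="acme.example")
print(result)
```

Separating "mentioned" from "cited" matters because an answer can name your brand without linking to you, or link to you without naming you.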

The tool updates daily, providing historical trends so you can track whether your AI visibility is improving or if you’ve lost ground on certain topics. This temporal view is critical, as you might discover, for instance, that a competitor’s new content has started getting cited by ChatGPT where you used to be mentioned.

SE Ranking also highlights the exact text of AI answers where your brand appears.

Reading these excerpts can help you understand the context: Are LLMs quoting you as an authority, or just mentioning your brand in passing? Are they using wording that aligns with your messaging? Such insights let you shape a stronger brand narrative and even refine your content’s tone or clarity to fit AI preferences better.

Additionally, because SE Ranking is a complete SEO suite, the AI visibility tracker sits alongside your keyword rankings, site audit, and backlink data. You’ll get a one-stop view of search performance in both traditional and AI realms.

4. Peec AI: Competitive Benchmarking for AI Search

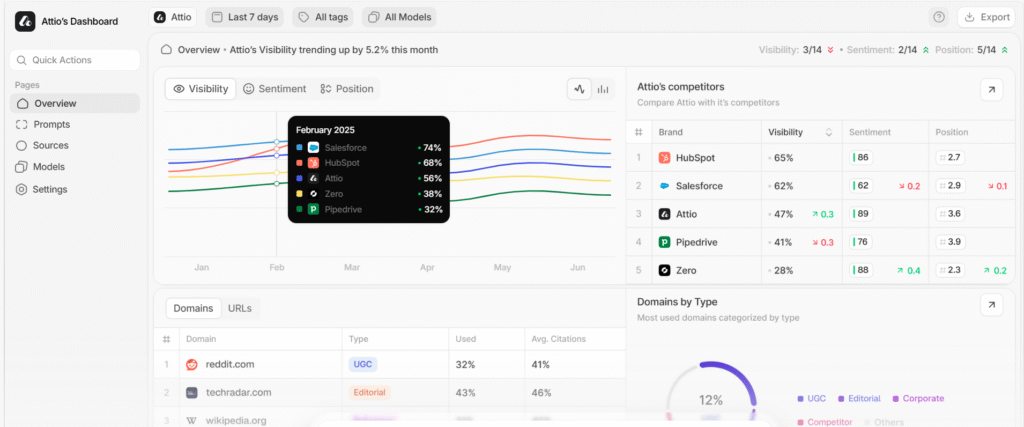

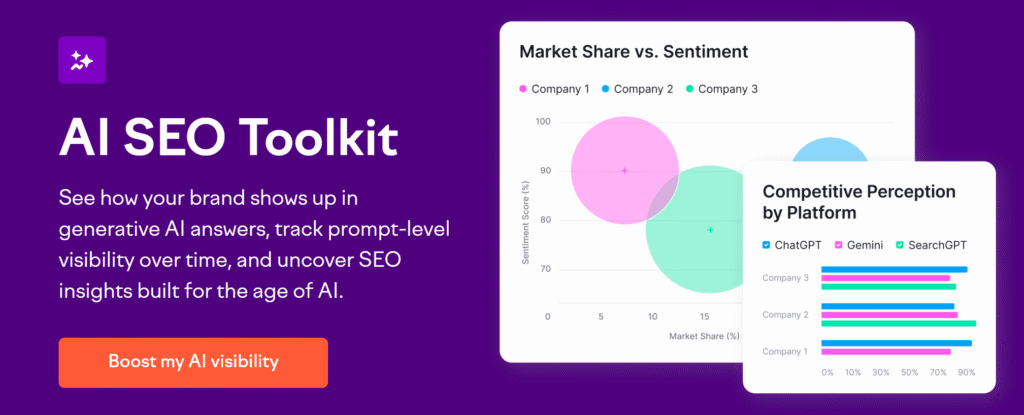

Peec AI takes a competitive intelligence angle on LLM optimization.

It’s designed to show you how often and in what context your brand is mentioned in AI answers relative to your competitors.

For marketers and founders concerned about market share and brand positioning, Peec provides a panoramic view of your category in the AI landscape.

Peec AI’s dashboard breaks down the frequency of mentions (essentially your brand’s share-of-voice) in various LLMs and compares it side by side with key competitors.
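As a rough illustration of share-of-voice, the sketch below counts how many sampled AI answers name each brand and converts the counts to percentages. It assumes a simple definition (answers naming the brand divided by all brand mentions across the sample), which may differ from how Peec AI calculates it. The brand names and answers are hypothetical.

```python
def share_of_voice(answers, brands):
    """Return each brand's share of all brand mentions, as a percentage."""
    counts = {brand: 0 for brand in brands}
    for text in answers:
        lowered = text.lower()
        for brand in brands:
            # Count an answer once per brand it names (presence, not frequency).
            # Plain substring matching is an assumption that keeps the sketch short.
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: round(100 * counts[brand] / total, 1) for brand in brands}


# Hypothetical sample of AI answers collected for one topic.
answers = [
    "For small teams, Acme and Globex are common picks.",
    "Globex is a frequent recommendation.",
    "Many reviewers suggest Globex or Acme.",
    "There is no clear leader in this category.",
]
sov = share_of_voice(answers, ["Acme", "Globex", "Initech"])
print(sov)
```

Here Globex is named in three answers and Acme in two, so out of five total mentions Globex holds 60% and Acme 40%.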

It doesn’t stop at raw counts; Peec also evaluates the sentiment and context of those mentions. Are you being cited as a positive example or mentioned in a negative context? This is important for brand reputation management in AI responses.

It even offers topic and entity analysis, helping you see which topics or keywords tend to surface your brand versus those that favor a competitor. This kind of insight can inform content strategy: if there are high-value topics where rivals dominate AI answers, you know where to focus your next content efforts.

Another strength is Peec’s emphasis on trend analysis over time. You can observe how AI mentions change month to month, which might correlate with your marketing campaigns or PR efforts. For instance, if you launched a campaign and see your AI mentions spike, that suggests the campaign is capturing AI attention.
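A month-to-month view like this boils down to simple percentage change. The sketch below is a hypothetical helper with made-up monthly counts, not Peec's reporting logic. Keep in mind that a spike lining up with a campaign is a correlation worth investigating, not proof of cause.

```python
def month_over_month(counts):
    """Return (month, change_pct) pairs; change is None if the prior month had zero mentions."""
    changes = []
    for (_, prev), (month, cur) in zip(counts, counts[1:]):
        pct = None if prev == 0 else round(100 * (cur - prev) / prev, 1)
        changes.append((month, pct))
    return changes


# Hypothetical monthly AI mention counts for one brand.
monthly_mentions = [("2024-01", 20), ("2024-02", 25), ("2024-03", 40)]
trend = month_over_month(monthly_mentions)
print(trend)
```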

Peec’s reports often include content-level recommendations as well. So if you’re lagging behind a competitor on certain queries, the tool might suggest improving specific content or adding particular data that AI seems to prefer for that query.

5. Track My Visibility: AI Visibility Tracking & Optimization Platform

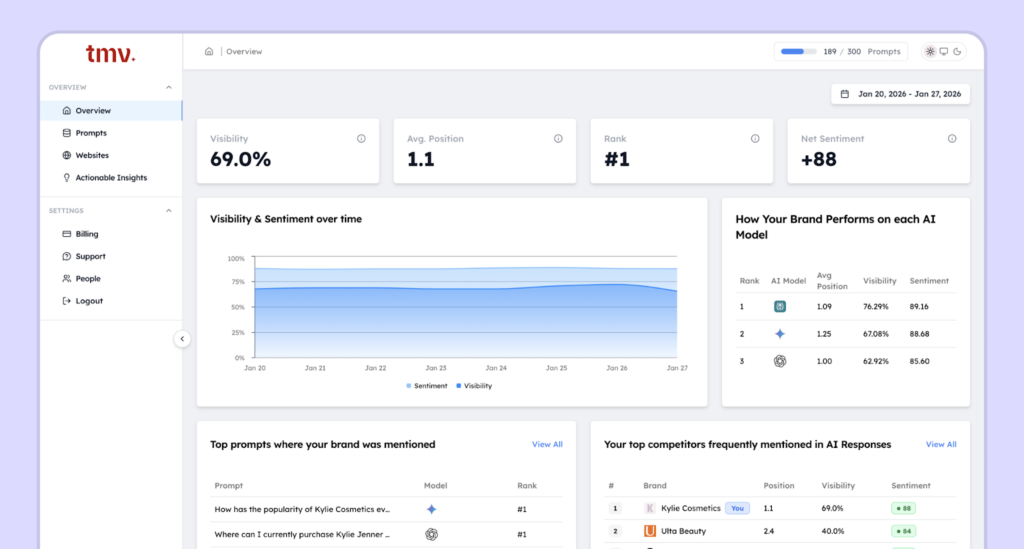

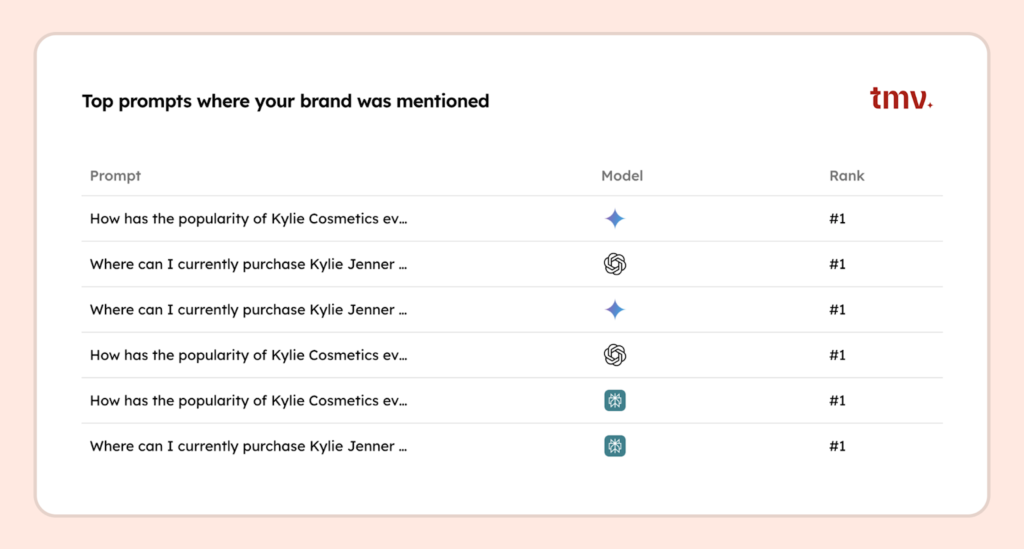

Track My Visibility (TMV) is a well-known AI search visibility platform that helps brands understand how they appear inside AI-generated answers.

As more users rely on tools like ChatGPT, Gemini, and Perplexity to discover products and services, brand visibility is no longer limited to traditional search engines. TMV shows whether your brand is mentioned or recommended in AI responses, and more importantly, explains why it may be missing.

The platform follows a simple three-step model. First, TMV measures AI visibility by tracking how often your brand appears across important prompts and how competitors are represented in the same answers. This gives teams a clear snapshot of their current AI presence.

Next, TMV diagnoses the gaps preventing AI systems from confidently recommending the brand. These gaps may come from technical access issues, weak content structure, or missing authority signals that AI models rely on when forming answers.

Finally, TMV provides clear guidance on what to fix. Each opportunity is connected to specific pages or signals so teams know exactly where to focus their efforts.

TMV does not replace SEO. It extends it into the AI layer, where brands are summarized, compared, and recommended directly inside answers.

6. Writesonic: AI Search Visibility for Content Teams

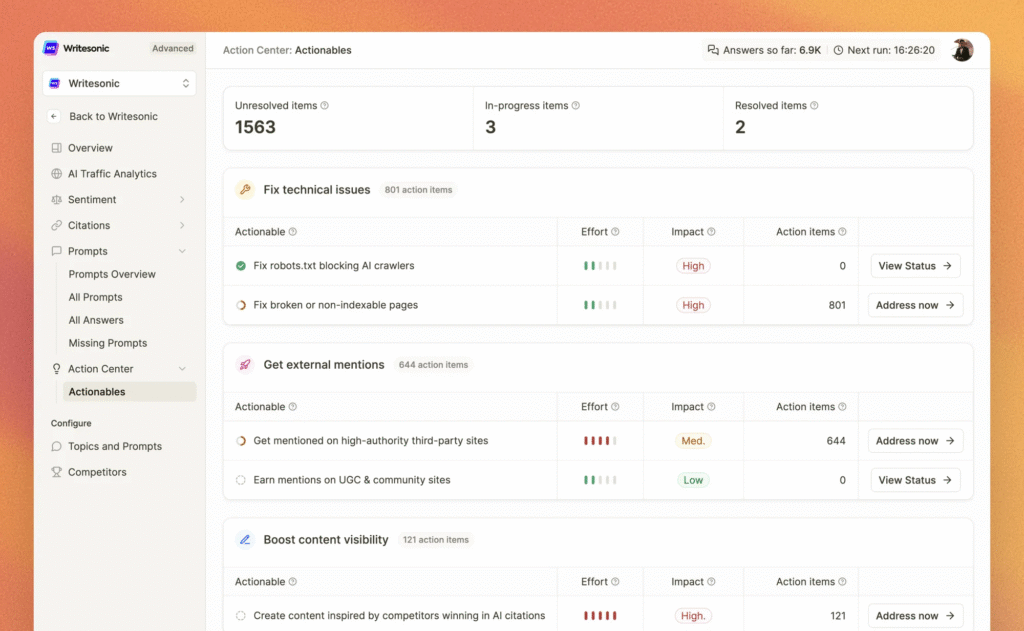

Writesonic is well-known for AI content generation, and it also offers an AI Search Visibility tool (GEO), essentially a brand monitor for LLMs built into its platform.

This tool is particularly appealing to content marketing teams and startups already using generative AI to produce content. It closes the loop between creating AI-driven content and measuring its impact on AI search visibility.

Writesonic’s AI visibility features will track where your AI-generated content appears in answers on ChatGPT, Claude, and other platforms. For example, if you use Writesonic to produce articles or web copy, the platform can help detect if those pieces are being cited or referenced by AI systems.

It effectively creates a feedback loop for content optimization. You generate content, monitor how it’s picked up in AI answers, and then tweak your content based on that performance data. This is immensely useful for content teams who might otherwise be “flying blind” regarding what AI does with their work.

Another advantage is integration. Since Writesonic is a content creation tool, the monitoring is built into the content workflow.

Marketers and writers can get suggestions within the platform on how to improve content so it’s more likely to be cited by AI. For instance, the tool could recommend adding certain structured data, including up-to-date stats, or phrasing content to answer common questions directly.
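For a sense of what "adding structured data" can look like in practice, here is a minimal sketch that builds schema.org `FAQPage` markup as JSON-LD from question-and-answer pairs. The helper name `faq_jsonld` and the sample question are hypothetical, and this is not output from Writesonic. Whether a given AI system actually uses such markup is not guaranteed.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ content for illustration.
faqs = [
    (
        "What is LLM visibility?",
        "How often and how prominently a brand appears in AI-generated answers.",
    )
]
markup = faq_jsonld(faqs)

# Wrap the JSON in the script tag that pages use to embed JSON-LD.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

The printed snippet can be placed in a page's HTML so the question and its direct answer are exposed in a machine-readable form.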

7. Semrush LLM Dashboard: Bridging SEO and AI Search

Semrush, a giant in the SEO software space, has introduced an LLM Visibility Dashboard as part of its toolkit.

This is a big deal because many SEO teams worldwide already rely on Semrush, and now they can extend their analysis to AI-generated search results without leaving the platform.

The LLM Dashboard in Semrush allows users to tie their existing SEO data (keywords, rankings, etc.) to AI visibility.

For example, you can see which of your high-value Google keywords now trigger AI answers on the search results page and whether your site is included in those AI answers or not.

It effectively overlays an “AI layer” on top of your normal SEO tracking. You’ll get reports on branded queries in AI tools (does ChatGPT mention you for Query X?) and even some content optimization suggestions specifically aimed at improving AI citations.

Because it’s part of Semrush, it integrates with other modules like keyword research and site audit. As a result, you might get holistic recommendations (e.g., improve this page’s content depth, and you might rank better on Google and be more likely to be cited by Gemini).

Another plus is collaboration and reporting: Semrush is well-established for reporting. You can likely generate white-label reports or custom dashboards that include AI visibility metrics alongside traditional SEO KPIs.

That said, because Semrush’s solution is an add-on to a general SEO suite, it may not be as specialized or granular in AI features as tools like Click Raven. It might currently lack some advanced insights (like detailed sentiment analysis or multi-model nuances).

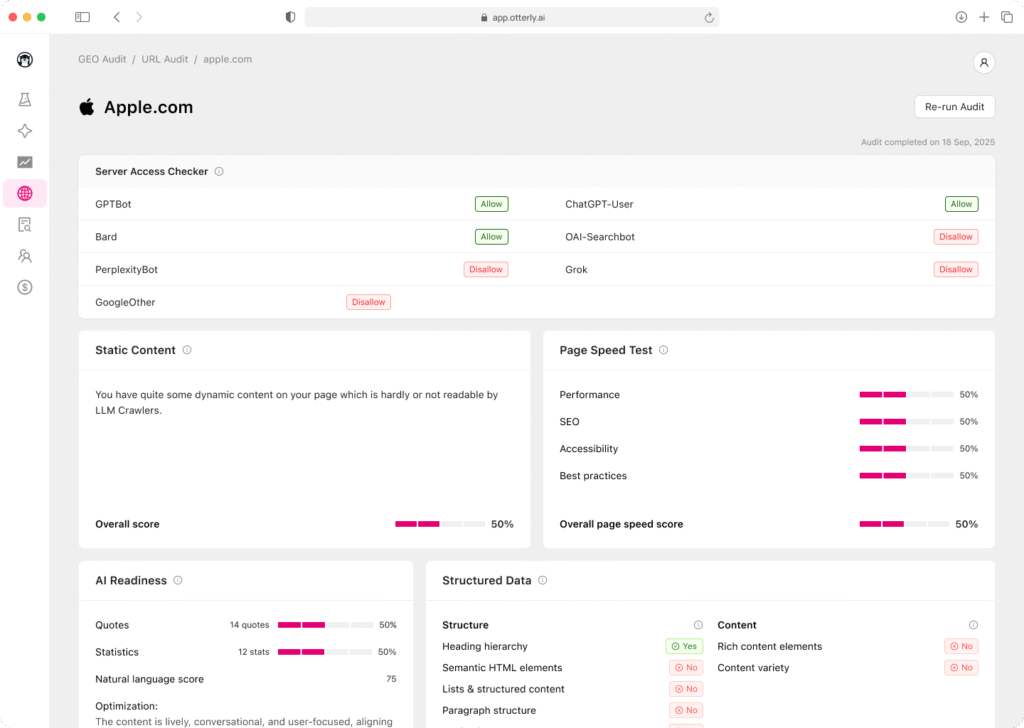

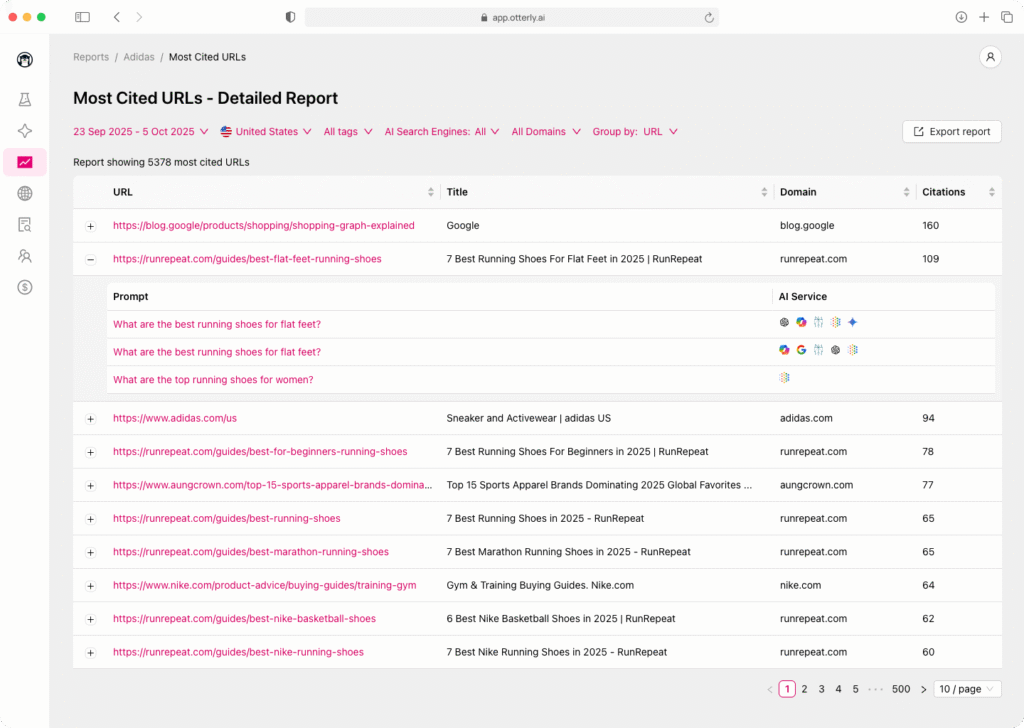

8. Otterly AI: Enterprise-Grade LLM Visibility

For large organizations with complex needs, Otterly AI is often cited as a top enterprise LLM visibility platform.

Otterly is built to monitor and optimize brand presence in AI answers at scale: across multiple markets, product lines, and even compliance regimes.

Otterly offers sophisticated cross-market tracking.

It can segment AI visibility data by region, product, or business unit, which is important for enterprises managing many brands or locales. You’ll get dashboards that aggregate how your brand (or specific sub-brands) is performing in AI search across different geographies.

It also provides insights into brand narrative consistency, flagging if an AI in one region portrays your brand differently than in another. This ties into compliance: Otterly can help ensure that AI platforms are reflecting the correct, compliant information about your brand in different markets (critical for industries like healthcare or finance).

Another hallmark is integration: Otterly offers direct integration with your CMS and analytics platforms. This means it can feed recommendations or data straight into your content management workflow or pull in conversion data to see if AI-referred visitors are taking action on your site.

Its reports include visibility gap analysis, pointing out where you have content or PR blind spots that are causing you to miss out on AI mentions.

For example, if your competitor always shows up for AI queries about a certain topic and you don’t, Otterly will surface that, and might recommend creating content or doing a campaign to fill that gap.

Conclusion: Embracing AI Visibility Tools in Your Strategy

As AI-driven search continues to grow, LLM visibility is becoming a critical metric for marketers and founders. It’s no longer enough to rank on page one of Google; you also need to rank as a trusted answer in ChatGPT, Gemini, Claude, and the next generation of AI assistants.

The tools we’ve recommended today represent the currently available solutions at the forefront of this emerging field. They’re leading LLM optimizers that can help you measure where you stand, discover content opportunities, and take action to improve your AI search presence.

As you choose your best LLM optimization tools, remember that succeeding with AI visibility comes down to a blend of quality content and strategic insight.

You need to create authoritative and quote-worthy content (just as traditional SEO demands). Then, you can use these new tools to ensure that content is recognized and cited by the AI algorithms shaping consumer attention.

Ready to boost your AI visibility today with a straightforward, affordable, and very effective platform? You can sign up for Click Raven today and track your brand in AI answers now.